Fragility By Design

Ideas developed from experience in operational resilience and IT disaster recovery (IT DR).

By Steven Hine

What This Site Explores

Most organisations believe they are resilient because the right plans, controls, and tests exist on paper.

In practice, systems fail in ways no one expected — not because nothing was done, but because confidence quietly outpaced real capability.

This site explores that gap.

It examines operational resilience, continuity, and recovery through the lens of fragility — how exposure accumulates across dependencies, assumptions, and decisions long before disruption occurs.

This is not another framework or maturity model.

It is an attempt to understand where resilience actually breaks down — and why surprise persists even in well-prepared organisations.

Fragility By Design

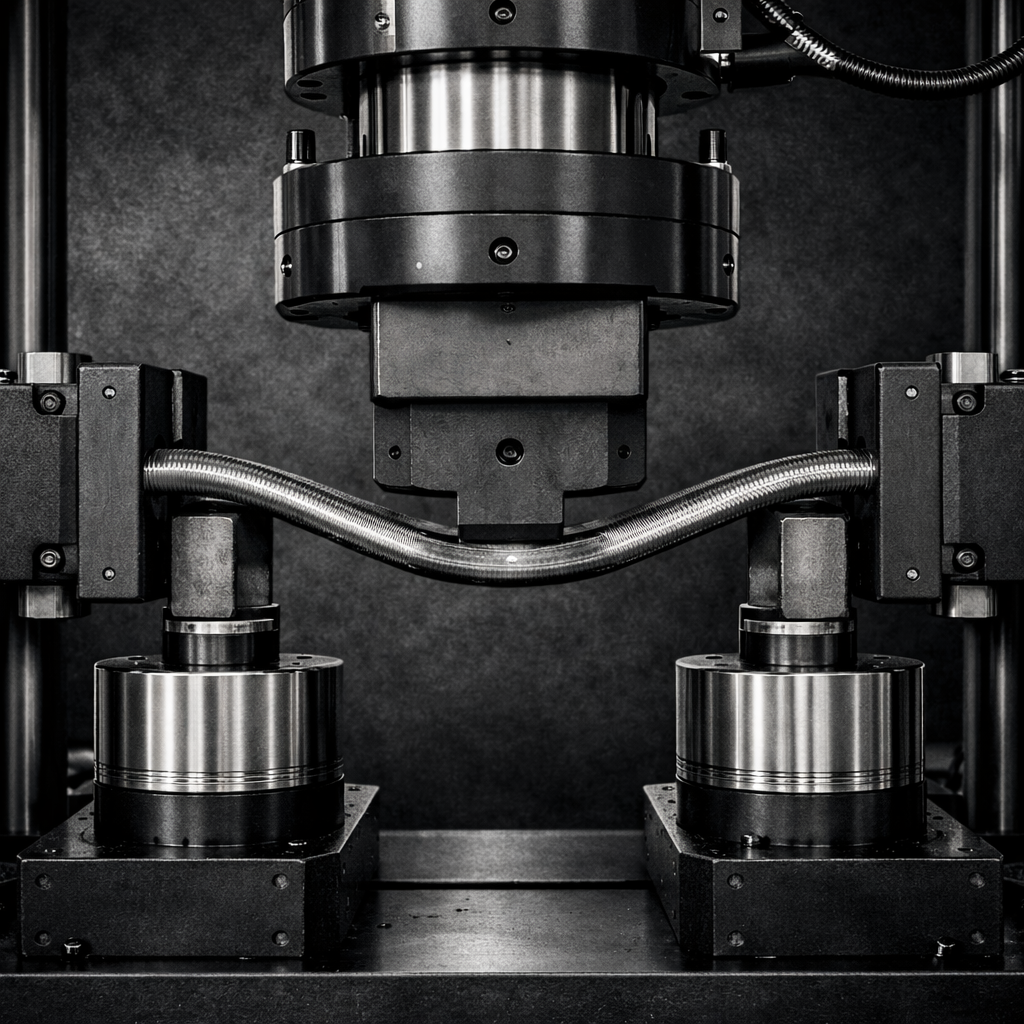

Exploring hidden fragility in complex operational systems.

IT DR & Continuity

Recovery, resilience testing, and real-world service behaviour under stress.

Severity is not a category. It’s a consequence.

When systems are placed under plausible stress, structural limits reveal themselves. Pre-defining severity narrows what testing is allowed to teach us.

Plausibility is the constraint.

Severity is the signal.

In certain market-adjacent activities – including execution, custody, clearing, collateral management and settlement – systems can remain technically available while conditions become progressively less stable. Trades continue to execute. Platforms respond. Processes function. From a narrow continuity perspective, the service appears intact.

Yet behaviour begins to shift.

Resilience often appears complete in documentation and dashboards.

But capability is only revealed when systems operate under stress.

The confidence gap forms in the quiet distance between assurance and behaviour.

Resilience failures rarely stem from missing controls. They emerge from hidden fragility within interacting dependencies. This paper explores the structural gap between confidence and capability — and why surprise persists even in well-prepared organisations.

Why tabletop exercises often confirm what we already believe — and how testing becomes rehearsal rather than discovery when assumptions go unchallenged.

Failover plans often exist. Testing schedules are agreed. Yet hesitation persists. This article explores why technical capability alone is not enough — and how organisational confidence, incentives, and perceived risk quietly shape whether recovery is ever truly exercised.